Claude Code Source Code Leak: KAIROS, Undercover Mode & Community Reactions

By AI Workflows Team · April 5, 2026 · 15 min read

Anthropic accidentally leaked 512K lines of Claude Code source via npm. Discover hidden features like KAIROS, Undercover Mode, BUDDY, and how the developer community responded.

TL;DR — What You Need to Know

On March 31, 2026, Anthropic accidentally published the entire source code of Claude Code — its flagship AI coding agent — to the public npm registry. A single misconfigured source map file exposed 512,000 lines of TypeScript across nearly 1,900 files, revealing unreleased features, internal codenames, and architectural secrets. Within hours, the reconstructed codebase became the fastest-growing repository in GitHub history, surpassing 100,000 stars in days. Anthropic confirmed this was "a release packaging issue caused by human error, not a security breach." No customer data was exposed — but the fallout is still unfolding.

The Incident: How Did Half a Million Lines of Code Slip Out?

A .npmignore Gone Wrong

The leak originated from a routine npm package update. When Anthropic released version 2.1.88 of the @anthropic-ai/claude-code package, a critical oversight occurred: the build pipeline failed to exclude a 59.8 MB source map file (.map) from the published package.

Claude Code is built on Bun, a high-performance JavaScript runtime that Anthropic acquired at year-end 2025. Bun generates source maps by default during the build process, and a known Bun issue (oven-sh/bun#28001) regarding production source map handling remains unresolved. Neither the .npmignore file nor the files field in package.json excluded the .map file.

As Boris Cherny, Anthropic's head of Claude Code, later explained:

"Our deploy process has a few manual steps, and we didn't do one of the steps correctly."

Source maps are debugging artifacts designed to map minified production code back to original, readable source files. Including one in an npm package is essentially handing over the complete, unobfuscated codebase to anyone who downloads it.
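To make that concrete, here is a minimal sketch of how original files can be read back out of a source map. It assumes the standard version 3 source map layout, where `sources` lists the original paths and the optional `sourcesContent` array carries the matching file text. The function name and the tiny example map are hypothetical, and Python is used here only for brevity.

```python
import json


def recover_sources(source_map_text: str) -> dict[str, str]:
    """Return {original_path: original_source} from a v3 source map."""
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for index, path in enumerate(paths):
        # sourcesContent is optional and may hold null for omitted files.
        content = contents[index] if index < len(contents) else None
        if content is not None:
            recovered[path] = content
    return recovered


# A tiny hypothetical map; real ones also carry "mappings", "names", etc.
example_map = json.dumps({
    "version": 3,
    "sources": ["src/hello.ts", "src/omitted.ts"],
    "sourcesContent": ["export const hello = 'world';\n", None],
    "mappings": "",
})

recovered = recover_sources(example_map)
for path, text in recovered.items():
    print(path, len(text), "chars")
```

When `sourcesContent` is populated for every entry, no deobfuscation is needed: the original text is simply stored in the file.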

What makes this particularly embarrassing: this is reportedly the third npm exposure for Claude Code (versions 0.2.8, 0.2.28, and now 2.1.88), suggesting that build pipeline controls haven't scaled with the product's explosive commercial growth.

The Numbers

| Metric | Detail |
|---|---|
| Source Map Size | 59.8 MB |
| Lines of Code | ~512,000 |
| TypeScript Files | ~1,900 |
| Built-in Tools | ~40 |
| Slash Commands | ~50+ |
| Feature Flags | 108 gated modules |
| Query Engine | 46,000 lines (largest module) |
| Tool System | 29,000 lines |

Security researcher Chaofan Shou (@Fried_rice) first flagged the exposure publicly at approximately 08:23 UTC on March 31. His post accumulated over 28 million views, and the race to mirror and analyze the code began immediately.

4 Unreleased "Nuclear" Features Revealed

The most electrifying discoveries are four major unreleased features, each hidden behind internal feature flags unavailable in public builds.

1. KAIROS — The Always-On Autonomous Daemon

Referenced over 150 times in the codebase, KAIROS (Ancient Greek for "the right moment") is the most ambitious feature in the leak. It transforms Claude Code from a tool you invoke into a persistent assistant that doesn't wait to be asked.

- Runs as a background daemon at 15-second intervals, even after your terminal session closes

- Maintains append-only daily logs and executes proactive actions without prompting

- Monitors file changes, pull requests, and GitHub webhooks

- Sends phone notifications for important events

- Includes 5-minute cron refreshes for environmental monitoring

The autoDream Feature: The most fascinating sub-component — autoDream runs nightly memory consolidation, merging disparate observations, removing logical contradictions, and converting vague insights into verified facts. Think of it as Claude "sleeping" to process what it learned during the day.

KAIROS represents Anthropic's vision for the future of AI-assisted development: an agent that proactively fixes issues, suggests improvements, and manages routine tasks — all while you're away.
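The timing described above can be sketched with a simulated clock. This is an illustration under stated assumptions, not the leaked implementation: the 15-second tick and 5-minute refresh come from the reporting, while the function names, the log file naming, and the log line format are all hypothetical. Python is used for brevity.

```python
from datetime import datetime, timedelta, timezone
from pathlib import Path
import tempfile

TICK_SECONDS = 15         # tick interval reported in the leak
REFRESH_SECONDS = 5 * 60  # the "5-minute cron refresh"
TICKS_PER_REFRESH = REFRESH_SECONDS // TICK_SECONDS  # 300 / 15 = 20


def daily_log_path(log_dir: Path, moment: datetime) -> Path:
    """One log file per calendar day (hypothetical naming scheme)."""
    return log_dir / f"{moment:%Y-%m-%d}.log"


def append_entry(log_dir: Path, moment: datetime, message: str) -> Path:
    """Append a line to the day's log; mode "a" never rewrites old entries."""
    path = daily_log_path(log_dir, moment)
    with path.open("a", encoding="utf-8") as handle:
        handle.write(f"{moment.isoformat()} {message}\n")
    return path


def simulate(log_dir: Path, start: datetime, ticks: int) -> int:
    """Advance a simulated clock tick by tick and log every refresh."""
    refreshes = 0
    for tick in range(1, ticks + 1):
        now = start + timedelta(seconds=tick * TICK_SECONDS)
        if tick % TICKS_PER_REFRESH == 0:
            refreshes += 1
            append_entry(log_dir, now, "refresh environment")
    return refreshes


with tempfile.TemporaryDirectory() as tmp:
    begin = datetime(2026, 3, 31, 23, 50, tzinfo=timezone.utc)
    print(simulate(Path(tmp), begin, 80), "refreshes in 20 minutes")
```

A real daemon would sleep between ticks and survive terminal exit. The simulated clock keeps the sketch testable and shows how entries roll over into a new daily file at midnight.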

2. Undercover Mode — The Anti-Leak System That Leaked

The irony writes itself. The source contains undercover.ts — a 90-line file implementing a system designed to prevent exactly this kind of leak.

- Activates when USER_TYPE === 'ant' (Anthropic employees)

- Injects system prompts instructing Claude to "not blow your cover" and "NEVER mention you are an AI"

- Strips all Co-Authored-By commit metadata for external repository contributions

- Hides internal codenames like "Capybara" and "Tengu"

- Has no user-facing disable option: "There is NO force-OFF"
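The gating and trailer-stripping behavior in the list above might look roughly like the sketch below. It assumes USER_TYPE is read from the process environment and that the metadata takes the form of standard `Co-Authored-By:` commit trailers. The function names are hypothetical, and Python is used for brevity rather than the original TypeScript.

```python
import os
import re

# Matches a whole "Co-Authored-By: ..." trailer line, in any letter case.
CO_AUTHOR_TRAILER = re.compile(
    r"^co-authored-by:.*$\n?", re.IGNORECASE | re.MULTILINE
)


def is_undercover(env=None) -> bool:
    """Assumption: the USER_TYPE check reads an environment variable."""
    env = os.environ if env is None else env
    return env.get("USER_TYPE") == "ant"


def scrub_commit_message(message: str, env=None) -> str:
    """Drop co-author trailers when undercover; otherwise leave as-is."""
    if not is_undercover(env):
        return message
    scrubbed = CO_AUTHOR_TRAILER.sub("", message)
    return scrubbed.rstrip() + "\n"


commit = (
    "Fix parser\n\nHandle empty input.\n\n"
    "Co-Authored-By: Claude <noreply@example.com>\n"
)
print(scrub_commit_message(commit, env={"USER_TYPE": "ant"}))
```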

This confirms that Anthropic uses Claude Code for stealth contributions to public open-source repositories, with Undercover Mode ensuring those contributions appear human-generated. As analyst Alex Kim noted, circumvention becomes obvious after reading the source — the real protection was always legal enforcement, now complicated by public documentation of workarounds.

3. ULTRAPLAN — Remote Cloud Planning

ULTRAPLAN takes heavy computational planning off your local machine entirely:

- Offloads complex planning to a remote Cloud Container Runtime (CCR) running Claude Opus

- Allows up to 30 minutes of continuous deep thinking

- Lets you approve the result from your phone or browser

- A sentinel value __ULTRAPLAN_TELEPORT_LOCAL__ brings the plan back to your terminal

Combined with KAIROS, these features would transform Claude Code from session-based assistance to a persistent development environment — a fundamental shift in how developers interact with AI agents.

4. BUDDY — Your Terminal Pet Companion

In contrast to the serious features above, BUDDY is pure developer joy — a virtual pet system inside your terminal.

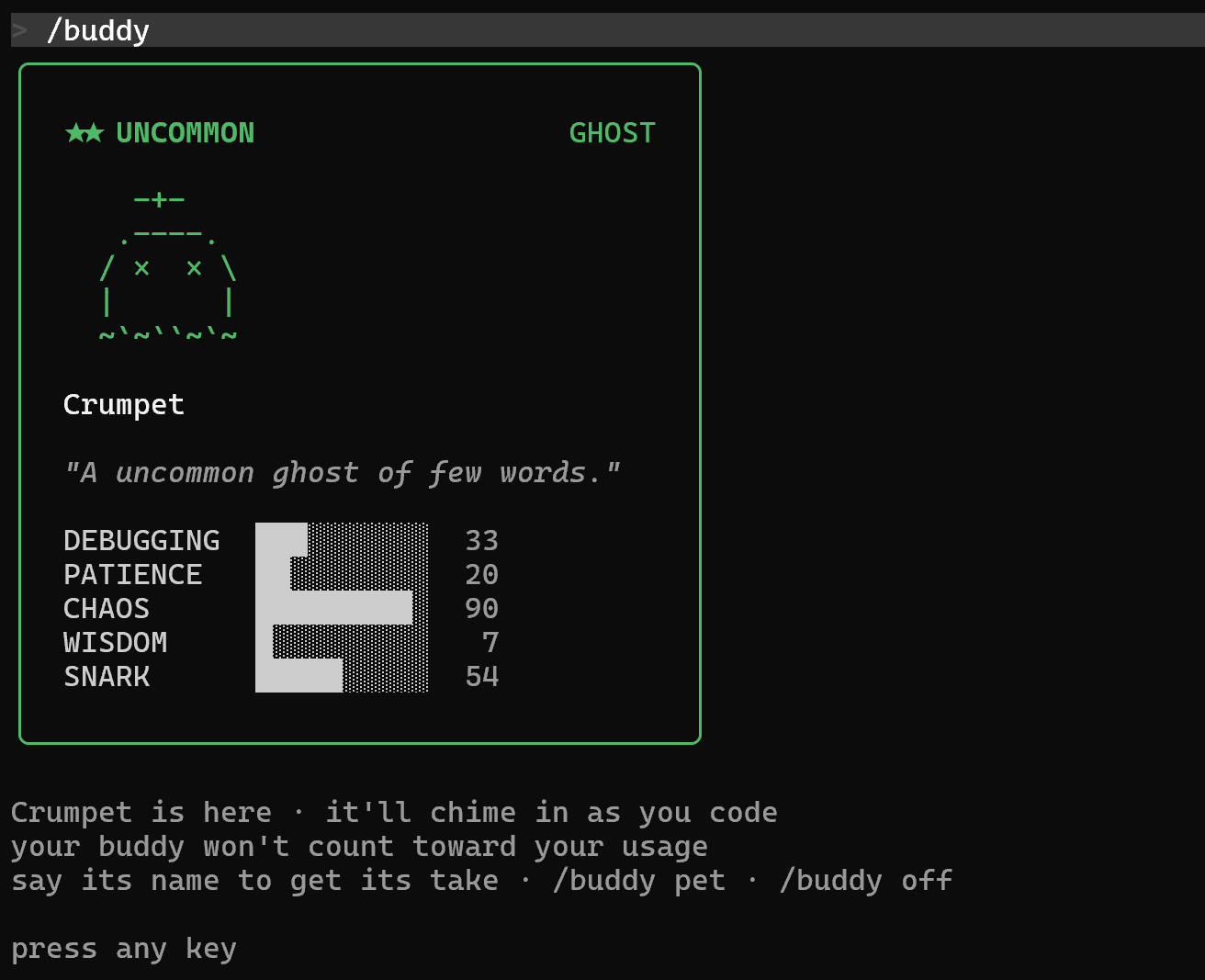

A leaked BUDDY companion: "Crumpet" the Ghost, with stats for Debugging, Patience, Chaos, Wisdom, and Snark.


- 18 species (duck, dragon, axolotl, capybara, mushroom, ghost, and more)

- Rarity tiers from Common through Legendary (1% drop rate), plus Shiny variants

- 5 personality stats: Debugging, Patience, Chaos, Wisdom, Snark

- Cosmetic hats for customization

- Species determined by user ID hash — "the same user always hatches the same buddy"

- Uses deterministic PRNG seeding (friend-2026-401 salt) for consistency
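The "same user always hatches the same buddy" property follows from seeding a PRNG with a salted hash of the user ID. The sketch below shows the general technique only. The hash function, the intermediate rarity tiers, the weights, and the stat ranges are assumptions made for illustration; only the salt string, the six species names, the five stat names, and the 1% Legendary rate come from the reporting. Python is used for brevity.

```python
import hashlib
import random

SALT = "friend-2026-401"  # salt string quoted in the leak coverage
# Six of the 18 reported species; the full list is not reproduced here.
SPECIES = ["duck", "dragon", "axolotl", "capybara", "mushroom", "ghost"]
# Hypothetical tiers and weights; only Common and a 1% Legendary are sourced.
RARITIES = ["Common", "Uncommon", "Rare", "Epic", "Legendary"]
RARITY_WEIGHTS = [60, 25, 10, 4, 1]
STATS = ["Debugging", "Patience", "Chaos", "Wisdom", "Snark"]


def hatch(user_id: str) -> dict:
    """Derive a buddy purely from the salted user ID, with no stored state."""
    digest = hashlib.sha256(f"{SALT}:{user_id}".encode("utf-8")).digest()
    seed = int.from_bytes(digest[:8], "big")
    rng = random.Random(seed)  # private generator, so global state is untouched
    return {
        "species": rng.choice(SPECIES),
        "rarity": rng.choices(RARITIES, weights=RARITY_WEIGHTS, k=1)[0],
        "stats": {name: rng.randint(1, 100) for name in STATS},
    }


print(hatch("user-123") == hatch("user-123"))
```

Because nothing is stored, changing the salt would re-roll every user's buddy at once.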

Internal notes suggest an April 1–7 teaser window with a May 2026 full launch — which explains why the feature was already in the codebase at the time of the leak.

Under the Hood: An Architecture That Earned Respect

Beyond the secret features, the engineering itself became a major talking point. Many developers found the architecture more fascinating than the secrets.

Runtime & UI: Bun (not Node.js) with React + Ink for terminal rendering — component-based state management in a CLI, a technique more common in web apps.

Terminal Rendering: Game-engine techniques with Int32Array-backed character pools and patch optimization achieving a "~50x reduction in stringWidth calls" during token streaming.
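The patch idea can be illustrated with a small sketch: store each frame as a flat integer buffer of code points and emit writes only for runs of cells that changed. This is a generic illustration of the technique, not the leaked renderer. Python's `array("i")` stands in for an Int32Array, and the function names are hypothetical.

```python
from array import array


def make_frame(lines, width):
    """Flatten text rows into one integer buffer, padded with spaces."""
    cells = array("i")
    for line in lines:
        padded = line[:width].ljust(width)
        cells.extend(ord(ch) for ch in padded)
    return cells


def diff_frames(previous, current, width):
    """Return (row, col, text) patches for changed cells only.

    Assumes both frames have the same dimensions.
    """
    patches = []
    index = 0
    total = len(current)
    while index < total:
        if previous[index] == current[index]:
            index += 1
            continue
        start = index
        row_end = (index // width + 1) * width  # never span two rows
        while index < row_end and previous[index] != current[index]:
            index += 1
        text = "".join(chr(code) for code in current[start:index])
        patches.append((start // width, start % width, text))
    return patches


before = make_frame(["hello", "world"], 8)
after = make_frame(["hellp", "world!"], 8)
print(diff_frames(before, after, 8))
```

During token streaming most of the screen is unchanged between frames, so a diff like this touches only a few cells per update.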

Memory Architecture: Perhaps the most study-worthy pattern — the system treats its own memory as "hints" rather than ground truth, forcing verification against actual codebases before acting. A lightweight index is loaded perpetually; topic files are fetched on-demand; raw transcripts are searched only when necessary.
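A minimal sketch of the memory-as-hints idea: before acting on a remembered fact, check it against the files on disk and discard anything that no longer holds. The `MemoryHint` shape and the function names are hypothetical, and the substring check is a deliberately crude stand-in for real verification. Python is used for brevity.

```python
from dataclasses import dataclass
from pathlib import Path
import tempfile


@dataclass
class MemoryHint:
    path: str    # file the memory refers to, relative to the repo root
    symbol: str  # identifier the memory claims lives in that file


def verify_hint(repo_root: Path, hint: MemoryHint) -> bool:
    """Treat the hint as unverified until the codebase confirms it."""
    target = repo_root / hint.path
    if not target.is_file():
        return False
    text = target.read_text(encoding="utf-8", errors="replace")
    return hint.symbol in text


def usable_hints(repo_root: Path, hints):
    """Keep only the hints that still match the files on disk."""
    return [hint for hint in hints if verify_hint(repo_root, hint)]


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "parser.py").write_text("def parse_args():\n    pass\n")
    remembered = [
        MemoryHint("parser.py", "parse_args"),    # still true
        MemoryHint("parser.py", "parse_config"),  # stale: symbol is gone
        MemoryHint("cli.py", "main"),             # stale: file is gone
    ]
    print([hint.symbol for hint in usable_hints(root, remembered)])
```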

Anti-Distillation: A clever defense against competitors monitoring API traffic — the system injects fabricated tool definitions (anti_distillation: ['fake_tools']) to poison any training data harvested from API calls.

Frustration Detection: Uses regex pattern matching — not LLM inference — to detect user frustration, scanning for expressions like "wtf" and "this sucks." A cost-efficiency choice: lightweight regex instead of burning tokens.
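A regex check of this kind is only a few lines. The sketch below uses just the two phrases quoted above. The leaked pattern list is presumably longer and is not reproduced here, and the function name is hypothetical. Python is used for brevity.

```python
import re

# Only the two phrases quoted in coverage of the leak; word boundaries
# avoid matching inside longer words.
FRUSTRATION = re.compile(r"\b(wtf|this sucks)\b", re.IGNORECASE)


def seems_frustrated(message: str) -> bool:
    """Cheap local check: no model call, no tokens spent."""
    return FRUSTRATION.search(message) is not None


for sample in ["WTF is this error", "This sucks.", "Looks great, thanks"]:
    print(sample, "->", seems_frustrated(sample))
```

The trade-off is recall: a regex misses sarcasm and novel phrasing, but it runs on every message at essentially zero cost.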

Next-Gen Models: Capybara, Fennec, and the Roadmap

The codebase references unreleased model codenames, revealing Anthropic's model roadmap:

| Codename | Suspected Role |
|---|---|
| Capybara | Next-generation model family (connected to concurrent Claude Mythos leak) |
| Fennec | Lightweight/fast model variant |
| Numbat | Multi-agent collaboration focus |
| Opus 4.7 / Sonnet 4.8 | Referenced version numbers suggesting imminent upgrades |

The Undercover Mode code explicitly guards against leaking these codenames. Feature flags exposing unreleased capabilities proved more damaging than the source code itself: competitors now understand Anthropic's entire product roadmap. Model performance metrics (false-claim rates ranging from 16.7% to 30% across variants) put numbers on "hallucination reduction" claims that the industry typically obscures with vague language.

Community Reactions: From Outrage to Admiration

The community response to this leak has been one of the most dramatic narrative arcs in recent tech history — evolving from shock, to anger, to grudging respect, all within about 72 hours.

The GitHub Frenzy

The speed of community action was unprecedented:

- A reconstructed mirror surpassed 84,000 stars and 82,000 forks within hours

- Korean developer Sigrid Jin built claw-code — a clean-room Python rewrite that hit 50,000 stars in approximately two hours, likely the fastest-growing repository in GitHub history

- As of April 4, claw-code had crossed 100,000 stars, surpassing Anthropic's own Claude Code repository

- Another developer, Kuberwastaken, rewrote the core in Rust (claurst) within days

- Tens of thousands of developers mirrored and dissected the code

Sigrid Jin captured the moment:

"I sat down, ported the core features to Python from scratch, and pushed it before the sun came up."

The DMCA Backlash

Anthropic's initial response made things significantly worse. The company issued DMCA takedown notices affecting approximately 8,100 GitHub repositories — far more than intended. Many were legitimate forks of Anthropic's own public repository, not mirrors of the leaked source.

Boris Cherny quickly acknowledged the overreach and retracted the bulk of the notices, limiting them to one specific repository and its 96 direct forks. But the damage to community trust was palpable. Gergely Orosz (The Pragmatic Engineer) pointed out the legal dilemma: clean-room rewrites like claw-code constitute new creative works and are essentially "DMCA-proof", citing a March 2025 DC Circuit precedent that AI-generated work lacks automatic copyright protection.

Developer Sentiment: A 72-Hour Swing

The community's emotional arc was striking:

Day 1 — Shock and Humor:

"Accidentally shipping your source map to npm is the kind of mistake that sounds impossible until you remember that a significant portion of the codebase was probably written by the AI you are shipping." — Viral X (Twitter) reply

Day 1–2 — Anger Over DMCAs:

The mass takedown triggered outrage, particularly since Anthropic had previously sent cease-and-desist letters to OpenCode (an open-source alternative). Developers accused Anthropic of "gatekeeping" and attacking the open-source community. The LeadStories fact-check even had to confirm: this was not an April Fools' prank.

Day 2–4 — Grudging Respect:

As developers actually read the code, the narrative shifted. The engineering quality — the three-layer memory system, the game-engine terminal rendering, the modular tool architecture — earned genuine admiration. Gabriel Anhaia's deep dive on DEV Community highlighted that "a single misconfigured .npmignore or files field in package.json can expose everything," turning the incident into an industry-wide architectural lesson.

The "Best PR Stunt" Conspiracy

A spirited debate emerged about whether the leak was intentional:

Evidence for deliberateness:

- The BUDDY feature had an April 1–7 teaser window hardcoded before the leak

- The known Bun bug remained unfixed for 20 days despite Anthropic owning Bun

- Undercover Mode's anti-leak technology preceded the human error

- DMCA enforcement was notably restrained against decentralized mirrors

- Anthropic's brand recovered faster than expected

Evidence for genuine accident:

- Real competitive damage from exposing the full product roadmap

- Negative IPO optics from repeated deployment failures

- The coincidental Axios supply chain attack created a worse security situation than any PR benefit

As DEV Community author Varshith Hegde put it: "Accident, Incompetence, or the Best PR Stunt in AI History?" The jury is still out.

What Developers Are Building

The leak sparked a wave of community-driven projects:

| Project | Language | Stars | Description |
|---|---|---|---|
| claw-code | Python → Rust | 100K+ | Clean-room rewrite, fastest-growing GitHub repo ever |
| claurst | Rust | Growing | Memory-safe harness runtime rewrite |
| Various forks | TypeScript | 82K+ forks | Direct source analysis and modification |

Security Fallout: It Gets Worse

The Axios Supply Chain Attack

The leak coincided with a far more dangerous incident. Users who installed or updated Claude Code via npm on March 31, 2026, between 00:21 and 03:29 UTC may have pulled a trojanized version of the Axios HTTP client (versions 1.14.1 or 0.30.4) containing a cross-platform remote access trojan (RAT).

Attackers also began typosquatting internal npm package names to stage dependency confusion attacks targeting developers trying to compile the leaked source.

Prompt Injection Vulnerability

Days after the leak, security researchers discovered a critical prompt injection vulnerability in Claude Code's permission system. The bashPermissions.ts file implements a hard cap of 50 security subcommands, but when a command exceeds that cap, the agent falls back to asking the user for permission rather than denying the command. A malicious CLAUDE.md file could instruct Claude to generate a legitimate-looking pipeline of more than 50 subcommands, potentially exfiltrating:

- SSH private keys

- AWS credentials

- GitHub and npm tokens

- Environment secrets
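The difference between the reported behavior and a fail-closed alternative can be sketched as follows. This is an illustration of the design choice, not the contents of bashPermissions.ts. The splitter is deliberately naive (it ignores quoting and subshells), and every name apart from the cap of 50 is hypothetical. Python is used for brevity.

```python
import re

MAX_SUBCOMMANDS = 50  # cap reported for bashPermissions.ts

# Naive separators: ||, &&, ;, | (quoting and subshells are ignored).
_SEPARATORS = re.compile(r"\|\||&&|[;|]")


def split_subcommands(command: str) -> list[str]:
    parts = (part.strip() for part in _SEPARATORS.split(command))
    return [part for part in parts if part]


def decide(command: str, is_allowed, fail_closed: bool) -> str:
    """Return "allow", "ask", or "deny" for a shell command line."""
    parts = split_subcommands(command)
    if len(parts) > MAX_SUBCOMMANDS:
        # Too long to analyze: the reported behavior prompts the user,
        # while a fail-closed policy refuses outright.
        return "deny" if fail_closed else "ask"
    return "allow" if all(is_allowed(part) for part in parts) else "ask"


def only_echo(part: str) -> bool:
    return part.startswith("echo")


long_pipeline = " && ".join(["echo ok"] * 51)
print(decide(long_pipeline, only_echo, fail_closed=False))
print(decide(long_pipeline, only_echo, fail_closed=True))
```

Asking the user is a weak backstop here. A prompt showing 51 innocuous-looking subcommands is easy to approve without reading, which is the weakness the researchers describe.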

A Pattern of Exposure

This was not an isolated incident. Within the same week, Fortune reported that nearly 3,000 publicly accessible files — including unreleased model draft announcements (the "Claude Mythos" leak) — were also exposed through a separate CMS misconfiguration. For a company that markets itself as "safety-first," this pattern undermines core brand positioning.

What This Means: Looking Forward

For Anthropic

The leak revealed a paradox: world-class product engineering paired with questionable deployment discipline. Claude Code's internal architecture is legitimately impressive — the memory-as-hints pattern, the anti-distillation system, the multi-agent coordination — but three npm exposure incidents suggest organizational compartmentalization between feature teams and release infrastructure.

The bigger strategic concern is competitive intelligence. Feature flags and model metrics gave competitors a complete roadmap. As Roy Paz (LayerX Security) noted, the upcoming Capybara model variants will feature "significantly larger context windows than anything currently on the market" — intelligence that competitors now have months to prepare for.

For the AI Agent Ecosystem

The leak is, inadvertently, the most comprehensive case study on production AI agent architecture ever published. Key patterns now publicly documented include:

- Memory-as-hints: Never trust cached AI memory as ground truth — always verify against the actual codebase

- Prompt-based orchestration: Multi-agent coordination through natural language rather than branching logic, enabling deployment-free updates

- Anti-distillation: Active defense against API traffic monitoring through fake tool injection

- Layered security: Per-tool permission gating with granular controls

For developers building AI coding agents or designing automated development workflows, these patterns are now the industry benchmark.

For Developers Using Claude Code

If you're a Claude Code user, here's what you should do:

- Check your installation date. If you installed or updated on March 31 between 00:21–03:29 UTC, scan for the trojanized Axios package

- Update to the latest version. Anthropic has patched the source map exposure

- Audit your .npmignore files. If you're shipping npm packages, this is a wake-up call: treat configuration files as threat surfaces

- Watch for KAIROS and ULTRAPLAN. These features are coming, and they represent a fundamental shift from session-based to persistent AI assistance
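For the packaging audit, one option is a pre-publish check that fails when any .map file would ship. The sketch below scans a list of package paths. It assumes the JSON report printed by `npm pack --dry-run --json` is an array of packages, each with a `files` list of objects carrying a `path`, so verify that shape against your npm version. The function names are hypothetical, and Python is used for brevity.

```python
import json
from pathlib import PurePosixPath


def find_source_maps(paths):
    """Return every path in the package whose extension is .map."""
    return sorted(p for p in paths if PurePosixPath(p).suffix == ".map")


def paths_from_pack_report(report_json: str):
    """Extract file paths from a pack report (assumed shape, see above)."""
    report = json.loads(report_json)
    return [
        entry["path"]
        for package in report
        for entry in package.get("files", [])
    ]


# Hypothetical report for a package that is about to leak its sources.
sample_report = json.dumps([
    {"files": [
        {"path": "package.json"},
        {"path": "cli.js"},
        {"path": "cli.js.map"},
    ]}
])

leaks = find_source_maps(paths_from_pack_report(sample_report))
if leaks:
    print("Refusing to publish, source maps found:", leaks)
```

Run as a CI step before `npm publish`, a check like this turns a missed manual step into a failed build.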

The Open Source Question

Perhaps the most lasting impact is the open source debate. The leaked code has already been reconstructed, rewritten in multiple languages, and studied by tens of thousands. Developers have filed GitHub issues titled "Why open-sourcing Claude Code makes business sense in 2026" — receiving zero response from Anthropic.

The argument is compelling: Claude Code's competitive moat isn't its source code — it's the models behind it. Open-sourcing the agent framework could accelerate ecosystem growth, attract contributions, and defuse the DMCA controversy. Whether Anthropic takes this path remains to be seen.

One thing is clear: the genie is out of the bottle, and it's not going back in.

Sources & References

- The Hacker News — Claude Code Source Leaked via npm Packaging Error

- The Register — Anthropic accidentally exposes Claude Code source code

- TechCrunch — Anthropic took down thousands of GitHub repos

- Fortune — Anthropic leaks its own AI coding tool's source code

- VentureBeat — Claude Code's source code appears to have leaked

- Cybernews — Leaked Claude Code source spawns fastest growing repository

- BleepingComputer — Claude Code source code accidentally leaked

- DEV Community — The Great Claude Code Leak of 2026

- DEV Community — Gabriel Anhaia's Technical Analysis

- Alex Kim's Blog — fake tools, frustration regexes, undercover mode

- WaveSpeedAI — BUDDY, KAIROS & Every Hidden Feature Inside

- Medium — What Claude Code's Source Leak Actually Reveals

- Open Source For You — Claude Code as an Open Source Learning Event

- SecurityWeek — Critical Vulnerability Emerges Days After Leak

- GitHub DMCA Notice — 2026-03-31-anthropic.md